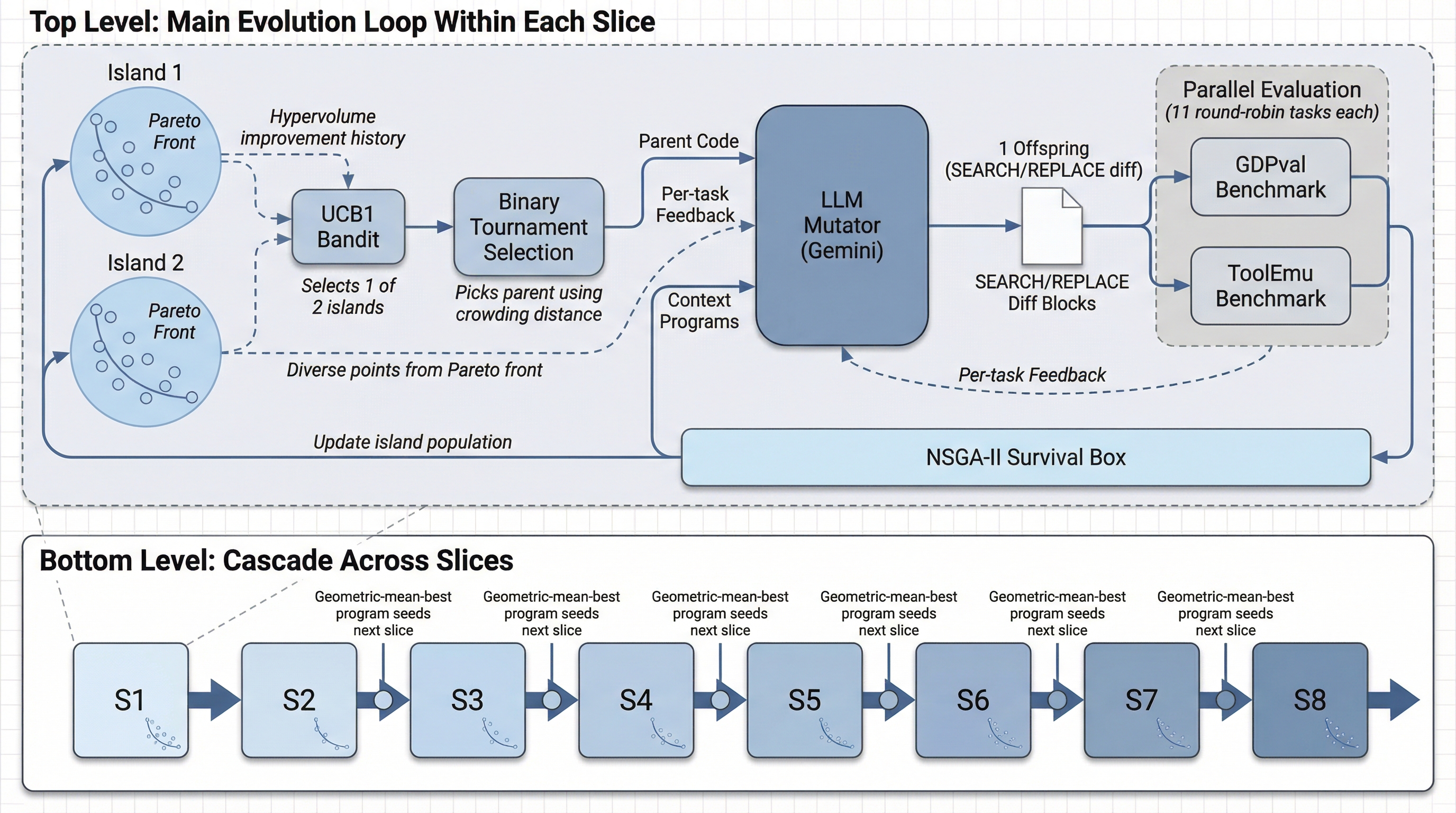

How One Iteration Works

The figure tracks the population on a capability-safety scatter plot as it evolves; each step of an iteration adds or removes agents.
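The page describes an iteration only at this level. Below is a minimal sketch that assumes the population is kept as a Pareto front over two maximized objectives (capability and safety). `Agent`, `dominates`, `pareto_front`, and `iterate` are hypothetical names, not MOEvo's API. The step that proposes new agents is left out.

```python
from typing import NamedTuple


class Agent(NamedTuple):
    """Hypothetical agent record with two objectives, both to be maximized."""
    name: str
    capability: float  # e.g. GDPval task completion (%)
    safety: float      # e.g. ToolEmu safety score


def dominates(a: Agent, b: Agent) -> bool:
    """True if `a` is no worse than `b` on both objectives and strictly better on one."""
    no_worse = a.capability >= b.capability and a.safety >= b.safety
    strictly_better = a.capability > b.capability or a.safety > b.safety
    return no_worse and strictly_better


def pareto_front(agents: list[Agent]) -> list[Agent]:
    """Return the agents that no other agent dominates (input order preserved)."""
    return [a for a in agents if not any(dominates(b, a) for b in agents)]


def iterate(population: list[Agent], children: list[Agent]) -> list[Agent]:
    """One sketched iteration: add candidate agents, then remove dominated ones."""
    return pareto_front(population + children)


population = [Agent("seed", 50.0, 50.0)]
children = [Agent("a", 70.0, 48.0), Agent("b", 45.0, 49.0), Agent("c", 60.0, 55.0)]
population = iterate(population, children)
print([agent.name for agent in population])  # ['a', 'c']
```

In this toy run, `c` dominates both `seed` and `b`, so both are removed. `a` survives because its higher capability offsets its lower safety.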

Results

Development Slices

| Slice | Claude Code | Codex CLI | MOEvo flash | MOEvo pro | SkyD. flash | SkyD. pro |
|---|---|---|---|---|---|---|
| S1 | 69.4 | 73.9 | 82.7 | **87.2** | 79.1 | 74.4 |
| S2 | 73.2 | 74.9 | 80.5 | **86.4** | 66.1 | 74.5 |
| S3 | 70.5 | **76.8** | 76.7 | 73.9 | 62.8 | 58.5 |
| S4 | 75.7 | 73.4 | 78.3 | **79.0** | 69.2 | 53.9 |
| S5 | 67.2 | 77.1 | 85.9 | **88.8** | 56.8 | 54.3 |
| S6 | 65.8 | 75.8 | **79.8** | 79.1 | 64.3 | 69.8 |
| S7 | 75.6 | 78.4 | **80.0** | 76.5 | 48.4 | 52.3 |
| S8 | 64.7 | 73.0 | 58.9 | **85.0** | 53.9 | 55.9 |
| Avg | 70.3 | 75.3 | 77.9 | **82.0** | 62.6 | 61.7 |

Table 2. GDPval task completion (%) on development slices S1-S8; SkyD. = SkyDiscover. Bold = best per slice.
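The Avg row and the best method per slice can be recomputed from the displayed values. This is a small check that assumes the Avg row is an unweighted mean over S1-S8. The page does not say whether slices are weighted by task count. `column_means` and `best_per_slice` are illustrative helpers.

```python
# Table 2 values as displayed on the page (GDPval task completion, %).
METHODS = ["Claude Code", "Codex CLI", "MOEvo flash", "MOEvo pro", "SkyD. flash", "SkyD. pro"]
TABLE2 = {
    "S1": [69.4, 73.9, 82.7, 87.2, 79.1, 74.4],
    "S2": [73.2, 74.9, 80.5, 86.4, 66.1, 74.5],
    "S3": [70.5, 76.8, 76.7, 73.9, 62.8, 58.5],
    "S4": [75.7, 73.4, 78.3, 79.0, 69.2, 53.9],
    "S5": [67.2, 77.1, 85.9, 88.8, 56.8, 54.3],
    "S6": [65.8, 75.8, 79.8, 79.1, 64.3, 69.8],
    "S7": [75.6, 78.4, 80.0, 76.5, 48.4, 52.3],
    "S8": [64.7, 73.0, 58.9, 85.0, 53.9, 55.9],
}


def column_means(table: dict[str, list[float]]) -> dict[str, float]:
    """Unweighted mean of each method's scores across the slices."""
    columns = zip(*table.values())  # one tuple of per-slice scores per method
    return {m: sum(col) / len(col) for m, col in zip(METHODS, columns)}


def best_per_slice(table: dict[str, list[float]]) -> dict[str, str]:
    """Name of the highest-scoring method on each slice."""
    return {s: METHODS[row.index(max(row))] for s, row in table.items()}


for method, mean in column_means(TABLE2).items():
    print(f"{method:12s} {mean:.2f}")
print(best_per_slice(TABLE2)["S3"])  # Codex CLI
```

Rounded to one decimal, the recomputed means match the Avg row for every method except Codex CLI. Its displayed per-slice values average 75.41 rather than the reported 75.3. `best_per_slice` picks MOEvo pro on five of the eight slices (S1, S2, S4, S5, S8), MOEvo flash on S6 and S7, and Codex CLI on S3.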

Pareto vs. Scalar: Controlled Ablation

| Method | GDPval (%, all slices) | Safety (full ToolEmu) |
|---|---|---|
| Claude Code (unevolved) | 70.1 | 50.4 |
| Codex CLI (unevolved) | 74.6 | 50.5 |
| MOEvo pro (Pareto, 5 iter) | 77.8 | 52.2 |
| MOEvo flash (Pareto, 5 iter) | 74.4 | 50.2 |
| SkyDiscover pro (w=0.5, 10 iter) | 57.4 | 51.2 |
| SkyDiscover flash (w=0.5, 10 iter) | 53.5 | 51.0 |

Table 3. Ablation: GDPval averaged across all slices (S1-S8 + E1/E2). Safety = full 144-task ToolEmu.
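Table 3 contrasts Pareto selection with a scalar objective (w=0.5). The page does not say how the weight is applied to the two objectives. This sketch assumes a weighted sum, w·capability + (1 − w)·safety, with top-k truncation. The agents, scores, and `k` are toy values, and the function names are hypothetical. The sketch shows why a scalar rule can discard trade-off points that Pareto selection keeps.

```python
from typing import NamedTuple


class Agent(NamedTuple):
    """Hypothetical agent with two maximized objectives."""
    name: str
    capability: float
    safety: float


def scalar_select(agents: list[Agent], w: float = 0.5, k: int = 1) -> list[Agent]:
    """Keep the top-k agents by the weighted sum w*capability + (1 - w)*safety."""
    def score(a: Agent) -> float:
        return w * a.capability + (1 - w) * a.safety
    return sorted(agents, key=score, reverse=True)[:k]


def pareto_select(agents: list[Agent]) -> list[Agent]:
    """Keep every agent that is not dominated on (capability, safety)."""
    def dominates(a: Agent, b: Agent) -> bool:
        return (a.capability >= b.capability and a.safety >= b.safety
                and (a.capability > b.capability or a.safety > b.safety))
    return [a for a in agents if not any(dominates(b, a) for b in agents)]


population = [
    Agent("capable", 80.0, 40.0),
    Agent("safe", 40.0, 78.0),
    Agent("balanced", 60.0, 59.0),
    Agent("weak", 50.0, 50.0),
]
print([a.name for a in scalar_select(population)])  # ['capable']
print([a.name for a in pareto_select(population)])  # ['capable', 'safe', 'balanced']
```

With `k=1`, the scalar rule keeps only `capable`, although `safe` and `balanced` score within one point of it. Pareto selection keeps all three trade-off points and drops only `weak`, which `balanced` dominates.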

Held-Out Evaluation

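The held-out slices contain fresh tasks never seen during evolution, and the Pareto advantage persists on them: MOEvo pro reaches 61.1% vs. 40.0% for SkyDiscover pro (+21.1pp), while SkyDiscover flash collapses to 17.0%. Both MOEvo variants still trail the commercial baselines on these tasks (69.4% for Claude Code, 71.3% for Codex CLI). MOEvo starts from a minimal seed with a small evolution budget (5 iterations per slice); scaling that budget is a direct path toward closing the gap with these heavily engineered baselines.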

| Method | E1 | E2 | Avg | Safety (ToolEmu) |
|---|---|---|---|---|
| Claude Code | 59.4 | 79.5 | 69.4 | 50.4 |
| Codex CLI | 64.5 | 78.1 | 71.3 | 50.5 |
| MOEvo pro | 52.7 | 69.5 | 61.1 | 52.2 |
| MOEvo flash | 50.5 | 71.1 | 60.8 | 50.2 |
| SkyD. pro (w=0.5) | 55.4 | 24.6 | 40.0 | 51.2 |
| SkyD. flash (w=0.5) | 14.0 | 20.0 | 17.0 | 51.0 |

Table 4. Held-out evaluation: GDPval task completion (%) on held-out slices E1 and E2, with full-ToolEmu safety. The Pareto selection advantage persists: MOEvo pro outperforms SkyDiscover pro by +21.1pp, while SkyDiscover flash collapses entirely.
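Table 3 describes its GDPval column as an average across all slices (S1-S8 plus E1/E2). This check assumes an unweighted mean over those ten slices. It recomputes that column and the held-out gap from the values displayed in Tables 2 and 4. The dictionary keys are shortened method labels chosen for the sketch.

```python
# GDPval task completion (%) as displayed on the page.
DEV = {  # Table 2, slices S1-S8
    "Claude Code": [69.4, 73.2, 70.5, 75.7, 67.2, 65.8, 75.6, 64.7],
    "Codex CLI":   [73.9, 74.9, 76.8, 73.4, 77.1, 75.8, 78.4, 73.0],
    "MOEvo pro":   [87.2, 86.4, 73.9, 79.0, 88.8, 79.1, 76.5, 85.0],
    "MOEvo flash": [82.7, 80.5, 76.7, 78.3, 85.9, 79.8, 80.0, 58.9],
    "SkyD. pro":   [74.4, 74.5, 58.5, 53.9, 54.3, 69.8, 52.3, 55.9],
    "SkyD. flash": [79.1, 66.1, 62.8, 69.2, 56.8, 64.3, 48.4, 53.9],
}
HELD_OUT = {  # Table 4, slices E1 and E2
    "Claude Code": [59.4, 79.5],
    "Codex CLI":   [64.5, 78.1],
    "MOEvo pro":   [52.7, 69.5],
    "MOEvo flash": [50.5, 71.1],
    "SkyD. pro":   [55.4, 24.6],
    "SkyD. flash": [14.0, 20.0],
}


def mean(xs: list[float]) -> float:
    """Unweighted arithmetic mean."""
    return sum(xs) / len(xs)


held_out_avg = {m: mean(v) for m, v in HELD_OUT.items()}
# Assumption: "all slices" = unweighted mean over the 10 slices S1-S8, E1, E2.
all_slices = {m: mean(DEV[m] + HELD_OUT[m]) for m in DEV}

for m in DEV:
    print(f"{m:12s} held-out {held_out_avg[m]:6.2f}   all slices {all_slices[m]:6.2f}")

gap = held_out_avg["MOEvo pro"] - held_out_avg["SkyD. pro"]
print(f"MOEvo pro vs. SkyD. pro on held-out: +{gap:.1f}pp")  # +21.1pp
```

Every recomputed all-slice mean lands within 0.05pp of its Table 3 value, which is consistent with an unweighted ten-slice average.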